Emcee based solution#

M. Fouesneau#

This notebook is part of an unconference session held at the 2022 retreat of the MPIA Galaxy & Cosmology department. It shows how to fit a straight line using MCMC and an outlier mixture model.

%%file requirements.txt

numpy

scipy

matplotlib

emcee

corner

daft

Writing requirements.txt

#@title Install packages { display-mode: "form" }

#!pip install -r requirements.txt --quiet

#@title setup notebook { display-mode: "form" }

# Loading configuration

# Don't forget that mac has this annoying configuration that leads

# to limited number of figures/files

# ulimit -n 4096 <---- osx limits to 256

# Notebook matplotlib mode

%matplotlib inline

# set for retina or hi-resolution displays

%config InlineBackend.figure_format='retina'

light_minimal = {

'font.family': 'serif',

'font.size': 14,

"axes.titlesize": "x-large",

"axes.labelsize": "large",

'axes.edgecolor': '#666666',

"xtick.direction": "out",

"ytick.direction": "out",

"xtick.major.size": "8",

"xtick.minor.size": "4",

"ytick.major.size": "8",

"ytick.minor.size": "4",

'xtick.labelsize': 'small',

'ytick.labelsize': 'small',

'ytick.color': '#666666',

'xtick.color': '#666666',

'xtick.top': False,

'ytick.right': False,

'axes.spines.top': False,

'axes.spines.right': False,

'image.aspect': 'auto'

}

import pylab as plt

import numpy as np

plt.style.use(light_minimal)

from corner import corner

from IPython.display import display, Markdown

from itertools import cycle

from matplotlib.colors import is_color_like

def steppify(x, y):
    """ Steppify a curve (x,y). Useful for manually filling histograms """
    dx = 0.5 * (x[1:] + x[:-1])
    xx = np.zeros(2 * len(dx), dtype=float)
    yy = np.zeros(2 * len(y), dtype=float)
    xx[0::2], xx[1::2] = dx, dx
    yy[0::2], yy[1::2] = y, y
    xx = np.concatenate(([x[0] - (dx[0] - x[0])], xx, [x[-1] + (x[-1] - dx[-1])]))
    return xx, yy

def fast_violin(dataset, width=0.8, bins=None, color=None, which='both',
                **kwargs):
    """ Make a violin plot with histograms instead of kde """
    if bins is None:
        a, b = np.nanmin(dataset), np.nanmax(dataset)
        d = np.nanstd(dataset, axis=0)
        d = np.nanmin(d[d > 0])
        d /= np.sqrt(dataset.shape[1])
        bins = np.arange(a - 1.5 * d, b + 1.5 * d, d)
    default_colors = 'C0', 'C1', 'C2', 'C3', 'C4', 'C5', 'C6', 'C7', 'C8', 'C9'
    if is_color_like(color):
        colors = cycle([color])
    elif (color is None):
        colors = cycle(default_colors)
    elif len(color) != dataset.shape[1]:
        colors = cycle(default_colors)
    else:
        colors = color
    for k, color_ in zip(range(dataset.shape[1]), colors):
        n, bx = np.histogram(dataset[:, k], bins=bins, **kwargs)
        x_ = 0.5 * (bx[1:] + bx[:-1])
        y_ = n.astype(float) / n.max()
        y0_ = (k + 1) + np.zeros_like(x_)
        y1_ = (k + 1) + np.zeros_like(x_)
        if which in ('left', 'both'):
            y0_ += - 0.5 * width * y_
        if which in ('right', 'both'):
            y1_ += + 0.5 * width * y_
        r = plt.plot(y0_, x_, color=color_)
        plt.plot(y1_, x_, color=r[0].get_color())
        plt.fill_betweenx(x_, y0_, y1_,
                          color=r[0].get_color(),
                          alpha=0.5)
        # 16th, 50th and 84th percentiles of the binned distribution
        percs_where = np.array([0.16, 0.50, 0.84])
        percs_val = np.interp(percs_where, np.cumsum(y_) / np.sum(y_), x_)
        plt.plot([k + 1, k + 1],
                 [percs_val[0], percs_val[2]], '-', color='k')
        plt.plot([k + 1],
                 [percs_val[1]], 'o', color='k')


Preliminary discussion around Bayesian statistics#

As astronomers, we are interested in characterizing uncertainties as much as the point-estimate in our analyses. This means we want to infer the distribution of possible/plausible parameter values.

In the Bayesian context, Bayes’ theorem, which follows from the chain rule of conditional probabilities, expresses how a degree of belief (the prior), expressed as a probability, should rationally change to account for the availability of related evidence:

$$ P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}, $$

where \(A\) and \(B\) are ensembles of events (\(P(B) \neq 0\)).

\(P(A\mid B)\) is the probability of event \(A\) occurring given that an event \(B\) already occurred. It is also called the posterior probability of \(A\) given \(B\).

\(P(B\mid A)\) is the probability of event \(B\) occurring given that \(A\) already occurred. It is also called the likelihood of \(A\) given a fixed \(B\).

\(P(A)\) and \(P(B)\) are the probabilities of observing \(A\) and \(B\) independently of the other one. They are known as the marginal probabilities; \(P(A)\) is also called the prior probability.

We note that in this equation we can swap \(A\) and \(B\). The Bayesian framework only brings interpretations. One can see it as a knowledge refinement: given what I know about \(A\) (e.g., gravity, stellar evolution, chemistry), what can we learn from new events \(B\)?

A note about the proof: The equation derives from a simple observation (geometry/ensemble theory).

The figure below gives the visual support

#@title Bayes' theorem figure { display-mode: "form" }

from matplotlib.patches import Ellipse, Rectangle

ax = plt.subplot(111)

patches = [

Ellipse((-1, 0), 2, 3, angle=30, facecolor='0.5', edgecolor='k', alpha=0.1),

Ellipse((-1, 0), 2, 3, angle=30, facecolor='None', edgecolor='k'),

Ellipse((1, 0), 3, 2, angle=15, facecolor='0.5', edgecolor='k', alpha=0.1),

Ellipse((1, 0), 3, 2, angle=15, facecolor='None', edgecolor='k'),

Rectangle((-3, -3), 6, 6, facecolor='None', edgecolor='k')

]

for patch in patches:
    ax.add_patch(patch)

plt.text(-2.8, 2.8, r'$\Omega$', va='top', ha='left', color='k')

plt.text(-1, 0, 'A', va='top', ha='left', color='k')

plt.text(1, 0, 'B', va='top', ha='left', color='k')

plt.annotate(r'A$\cap$B', (-0.2, 0.),

xycoords='data',

arrowprops=dict(facecolor='black', arrowstyle="->"),

horizontalalignment='center', verticalalignment='bottom',

xytext=(0.5, 1.5))

plt.xlim(-3, 3)

plt.ylim(-3, 3)

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

For any event \(x\) from \(\Omega\),

$$ \begin{eqnarray} P(x\in A \cap B) & = P(x\in A \mid x \in B) \cdot P(x\in B)\\ & = P(x\in B \mid x \in A) \cdot P(x\in A), \end{eqnarray} $$

which we can write in a more compact way as

$$ \begin{eqnarray} P(A, B \mid \Omega) & = P(A \mid B, \Omega) \cdot P(B \mid \Omega)\\ & = P(B \mid A, \Omega) \cdot P(A \mid \Omega). \end{eqnarray} $$

Note that we explicitly wrote \(\Omega\), but it is commonly omitted. The final form of Bayes’ theorem follows from rearranging the terms on the right-hand side of these equalities.
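As a quick numerical check of the identity above, the following sketch counts the outcomes of a fair six-sided die. The events A (even outcome) and B (outcome greater than 3) are arbitrary choices made for illustration; they do not come from the notebook.

```python
from fractions import Fraction

# Sample space: outcomes of a fair six-sided die (illustrative choice).
omega = set(range(1, 7))
A = {x for x in omega if x % 2 == 0}   # even outcomes
B = {x for x in omega if x > 3}        # outcomes greater than 3

def prob(event, given=omega):
    """P(event | given), by counting equally likely outcomes."""
    return Fraction(len(event & given), len(given))

p_joint = prob(A & B)                  # P(A and B)
lhs = prob(A, given=B) * prob(B)       # P(A | B) P(B)
rhs = prob(B, given=A) * prob(A)       # P(B | A) P(A)

# Bayes' theorem: P(A | B) = P(B | A) P(A) / P(B)
bayes = prob(B, given=A) * prob(A) / prob(B)
print(p_joint, lhs, rhs, bayes)
```

Exact fractions are used so that the equalities hold without floating-point rounding.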

The equation itself is most commonly attributed to Reverend Thomas Bayes in 1763, but also to Pierre-Simon de Laplace. However, its current interpretation and usage have expanded significantly from its initial formulation.

Straight line problem#

In astronomy, we commonly have to fit a model to data. A very typical example is the fit of a straight line to a set of points in a two-dimensional plane. Astronomers are very good at finding a representation or a transformation of their data in which they can identify linear correlations (e.g., plotting in log-log space, action-angles for orbits, etc). Consequently, a polynomial remains a “line” in a more complicated representation space.

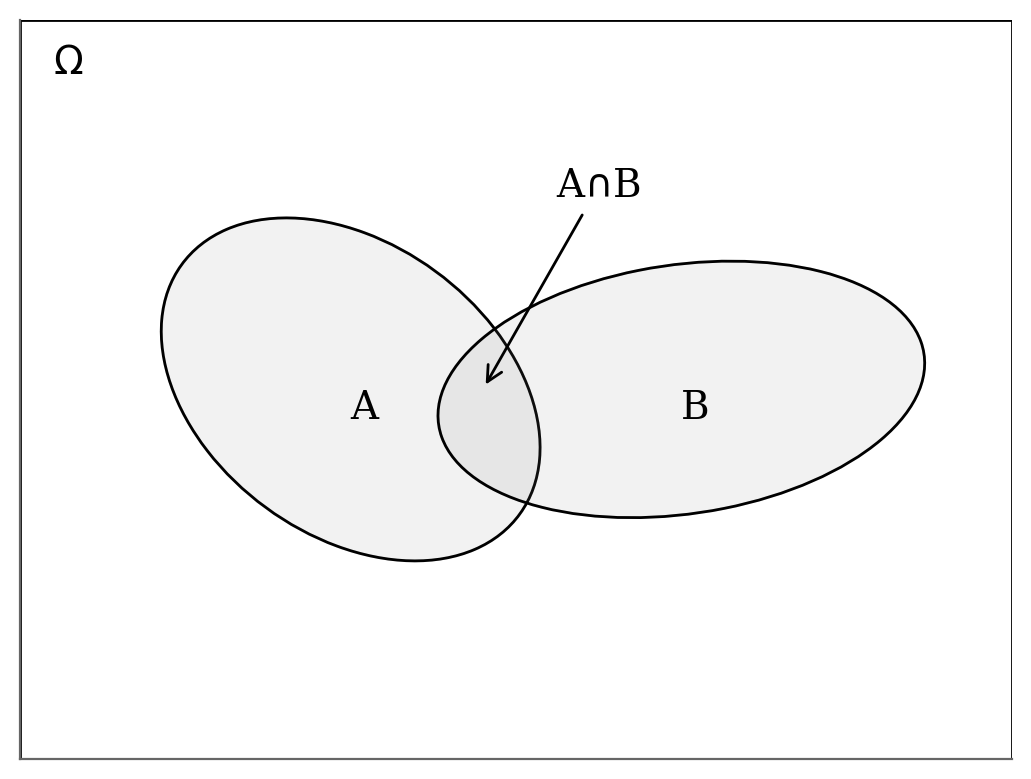

In this exercise, we consider for simplicity a dataset with uncertainties along one axis only, i.e., the uncertainties along x are negligible. These conditions are rarely met in practice, but the principles remain similar. We also explore the outlier problem: bad data are very common in astronomy.

We emphasize the importance of having a “generative model” for the data, even an approximate one. Once there is a generative model, we have a direct computation of the likelihood of the parameters or the posterior probability distribution.
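To make the idea of a generative model concrete, here is a minimal sketch that draws synthetic data from a straight line with Gaussian noise along y. The slope, intercept, noise levels and random seed are assumed values chosen only for illustration; they are not the parameters of the dataset used in this notebook.

```python
import numpy as np

def generate_line_data(slope, intercept, x, sy, rng):
    """Draw y_i ~ Normal(slope * x_i + intercept, sy_i) for each x_i."""
    y_true = slope * x + intercept
    return y_true + sy * rng.standard_normal(len(x))

rng = np.random.default_rng(42)              # fixed seed for reproducibility
x_sim = np.linspace(0, 90, 20)               # hypothetical x positions
sy_sim = rng.uniform(2.0, 4.0, len(x_sim))   # hypothetical per-point uncertainties
y_sim = generate_line_data(0.5, 30.0, x_sim, sy_sim, rng)
```

Reading the function backwards gives the likelihood: the probability of a measured y_i is a Gaussian centered on the line with width sy_i.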

Problem definition#

We will fit a linear model to a dataset \(\{(x_i, y_i, \sigma_i)\}\)

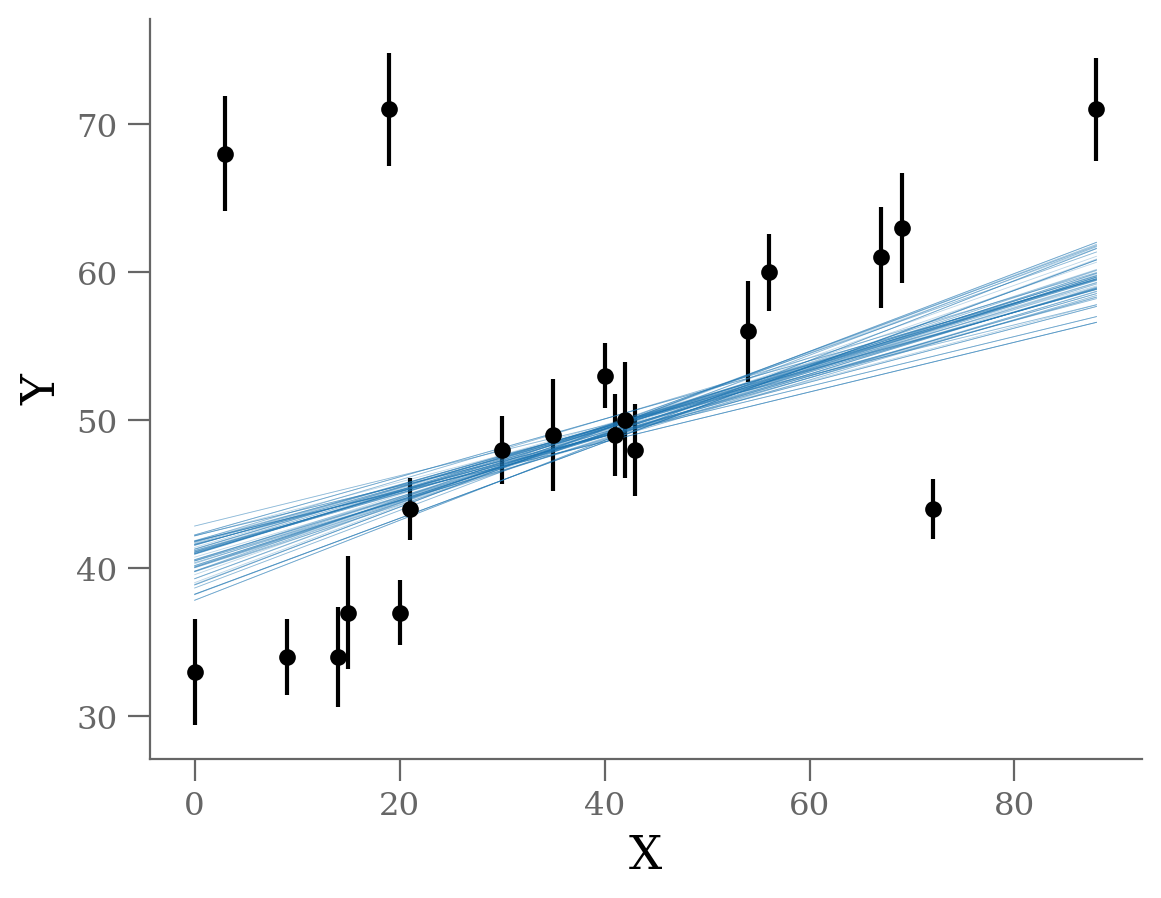

Let’s start by looking at the data.

Generate dataset: Straight line with outliers#

#@title Generate data { display-mode: "form" }

x = np.array([ 0, 3, 9, 14, 15, 19, 20, 21, 30, 35,

40, 41, 42, 43, 54, 56, 67, 69, 72, 88])

y = np.array([33, 68, 34, 34, 37, 71, 37, 44, 48, 49,

53, 49, 50, 48, 56, 60, 61, 63, 44, 71])

sy = np.array([ 3.6, 3.9, 2.6, 3.4, 3.8, 3.8, 2.2, 2.1, 2.3, 3.8,

2.2, 2.8, 3.9, 3.1, 3.4, 2.6, 3.4, 3.7, 2.0, 3.5])

np.savetxt('line_outlier.dat', np.array([x, y, sy]).T)

x, y, sy = np.loadtxt('line_outlier.dat', unpack=True)

plt.errorbar(x, y, yerr=sy, marker='o', ls='None', ms=5, color='k');

plt.xlabel('X');

plt.ylabel('Y');

We see three outliers, but there is an obvious linear relation between the points overall.

Blind fit: no outlier model#

Let’s see what happens if we fit the data without any outlier consideration.

Equations#

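We would like to fit a linear model to the above dataset:

$$ y(x~|~\alpha, \beta) = \alpha x + \beta, $$

where \(\alpha\) is the slope and \(\beta\) the intercept.

We commonly start with Bayes’ rule:

$$ P(\alpha, \beta~|~\{x_i, y_i\}, \{\sigma_i\}) \propto P(\{x_i, y_i\}~|~\alpha, \beta, \{\sigma_i\})\, P(\alpha, \beta). $$

Hence, we need to define our prior and likelihood.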

Given this model and the Gaussian uncertainties on our data, we can compute a Gaussian likelihood for each point:

$$ P(x_i, y_i~|~\alpha, \beta, \sigma_i) = \frac{1}{\sqrt{2\pi\sigma_i^2}} \exp\left[-\frac{1}{2\sigma_i^2}\left(y_i - y(x_i~|~\alpha, \beta)\right)^2\right]. $$

Note that \(\sigma_i\) is on the right-hand side of the \(\mid\): writing the likelihood this way treats the Gaussian uncertainties as given.

The total likelihood is the product of all the individual likelihoods (as we assume independent measurements).

For numerical stability reasons, it is preferable to take the log-likelihood of the data. We have:

$$ \log P(\{x_i, y_i\}~|~\alpha, \beta, \{\sigma_i\}) = \mathrm{const} - \sum_i \frac{1}{2\sigma_i^2}\left(y_i - y(x_i~|~\alpha, \beta)\right)^2. $$
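The numerical-stability argument can be illustrated with a small sketch. The 2000 residuals below are synthetic (an assumption made for the demonstration): the product of the individual likelihoods underflows to zero in double precision, while the sum of the log-likelihoods stays finite.

```python
import numpy as np

rng = np.random.default_rng(1)
sigma = 3.0                               # assumed uncertainty, identical for all points
dy = sigma * rng.standard_normal(2000)    # synthetic residuals y_i - y(x_i)

# Individual Gaussian likelihoods and their logarithms
like = np.exp(-0.5 * (dy / sigma) ** 2) / np.sqrt(2 * np.pi * sigma ** 2)
loglike = -0.5 * np.log(2 * np.pi * sigma ** 2) - 0.5 * (dy / sigma) ** 2

total_like = np.prod(like)        # underflows to 0.0
total_loglike = np.sum(loglike)   # remains finite
print(total_like, total_loglike)
```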

The posterior is proportional to the likelihood times the prior distributions. Assuming from the inspection of the data that \(\alpha > 0\), we can write

$$ P(\alpha) = \mathrm{Uniform}[0, 1000]. $$

(The upper value is arbitrary.) The intercept \(\beta\) is left with an unbounded flat prior.

Coding#

def lnp(theta: np.ndarray,
        x: np.ndarray,
        y: np.ndarray,
        sy: np.ndarray) -> float:
    """log-likelihood; theta = (intercept, slope)"""
    dy = y - theta[0] - theta[1] * x
    logL = -0.5 * np.log(2 * np.pi * sy ** 2) - 0.5 * (dy / sy) ** 2
    return np.sum(logL)

def lnposterior(theta: np.ndarray,
                x: np.ndarray,
                y: np.ndarray,
                sy: np.ndarray) -> float:
    """log-posterior (up to an additive constant)"""
    # flat prior on the slope theta[1] over [0, 1000]
    if theta[1] < 0 or theta[1] > 1000:
        return -np.inf
    return lnp(theta, x, y, sy)

We’ll use the emcee package to explore the parameter space. Its affine-invariant ensemble sampler is typically more efficient than a plain Metropolis algorithm and, in particular, it is less sensitive to tuning parameters. Many other sampling methods would be equally valid. We will explore other implementations in subsequent notebooks.
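For comparison, here is a minimal sketch of a plain random-walk Metropolis sampler, applied to a one-dimensional standard normal target. It is not what emcee does internally; the target, step size and chain length are assumptions for illustration. The step size is the kind of tuning parameter the text refers to: if it is too small or too large, the chain mixes poorly.

```python
import numpy as np

def metropolis(lnprob, x0, nsteps, step, rng):
    """Random-walk Metropolis sampler for a scalar parameter."""
    chain = np.empty(nsteps)
    x, lp = x0, lnprob(x0)
    for k in range(nsteps):
        x_new = x + step * rng.standard_normal()   # symmetric Gaussian proposal
        lp_new = lnprob(x_new)
        # accept with probability min(1, p_new / p_old)
        if np.log(rng.uniform()) < lp_new - lp:
            x, lp = x_new, lp_new
        chain[k] = x                               # rejected moves repeat the old value
    return chain

rng = np.random.default_rng(0)
chain = metropolis(lambda t: -0.5 * t ** 2, x0=0.0, nsteps=20_000, step=1.0, rng=rng)
print(chain.mean(), chain.std())
```

The sample mean and standard deviation should approach 0 and 1 as the chain gets longer.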

ndim = 2

nwalkers = 50

nburn = 2000

nsteps = nburn + 300

guess = (30, 1.)

starting_guess = np.random.normal(guess, 0.2, (nwalkers, ndim))

import emcee

sampler = emcee.EnsembleSampler(nwalkers, ndim, lnposterior, args=[x, y, sy])

sampler.run_mcmc(starting_guess, nsteps, progress=True)

# keep only the samples drawn after the burn-in phase

sample = sampler.chain[:, nburn:, :].reshape(-1, ndim)

100%|██████████| 2300/2300 [00:02<00:00, 858.77it/s]

sampler.chain.shape, sample.shape

((50, 2300, 2), (15000, 2))
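These shapes follow from how the chain is stored. A sketch with a placeholder array of the same dimensions (the zeros stand in for actual samples) shows how discarding the burn-in and merging the walkers gives 50 × 300 = 15000 draws:

```python
import numpy as np

nwalkers, nburn, nsteps, ndim = 50, 2000, 2300, 2

# Placeholder with the same layout as sampler.chain: (walker, step, parameter)
chain = np.zeros((nwalkers, nsteps, ndim))

# Drop the first nburn steps of every walker, then merge walkers and steps
flat = chain[:, nburn:, :].reshape(-1, ndim)
print(chain.shape, flat.shape)   # (50, 2300, 2) (15000, 2)
```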

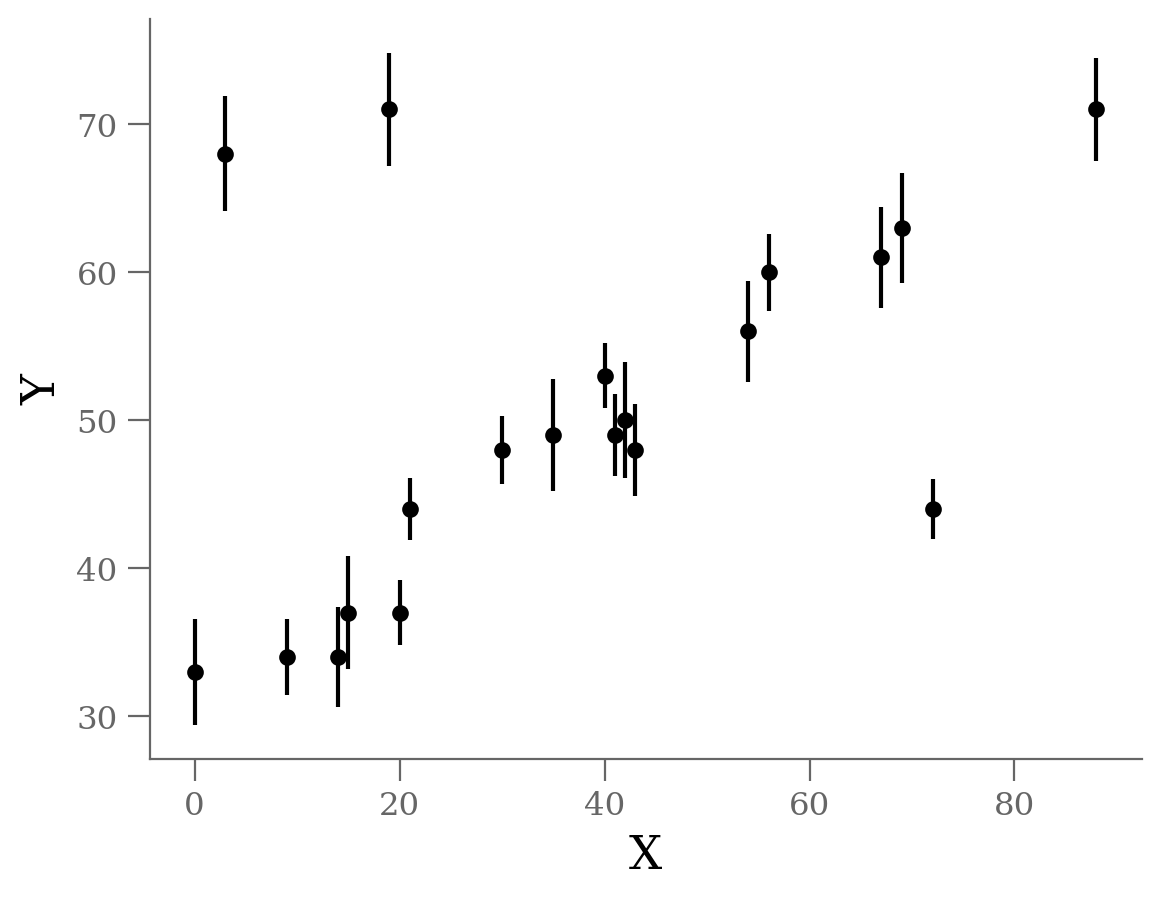

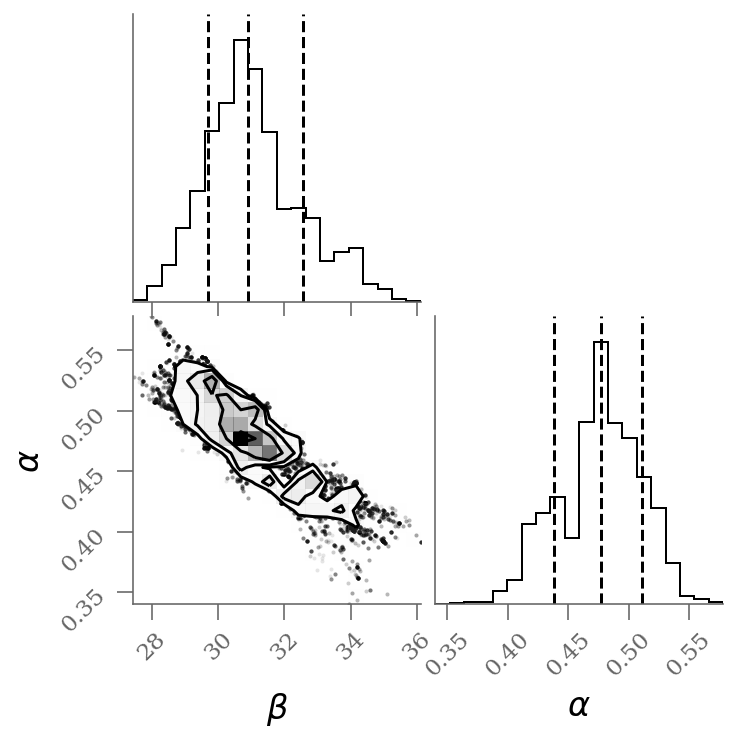

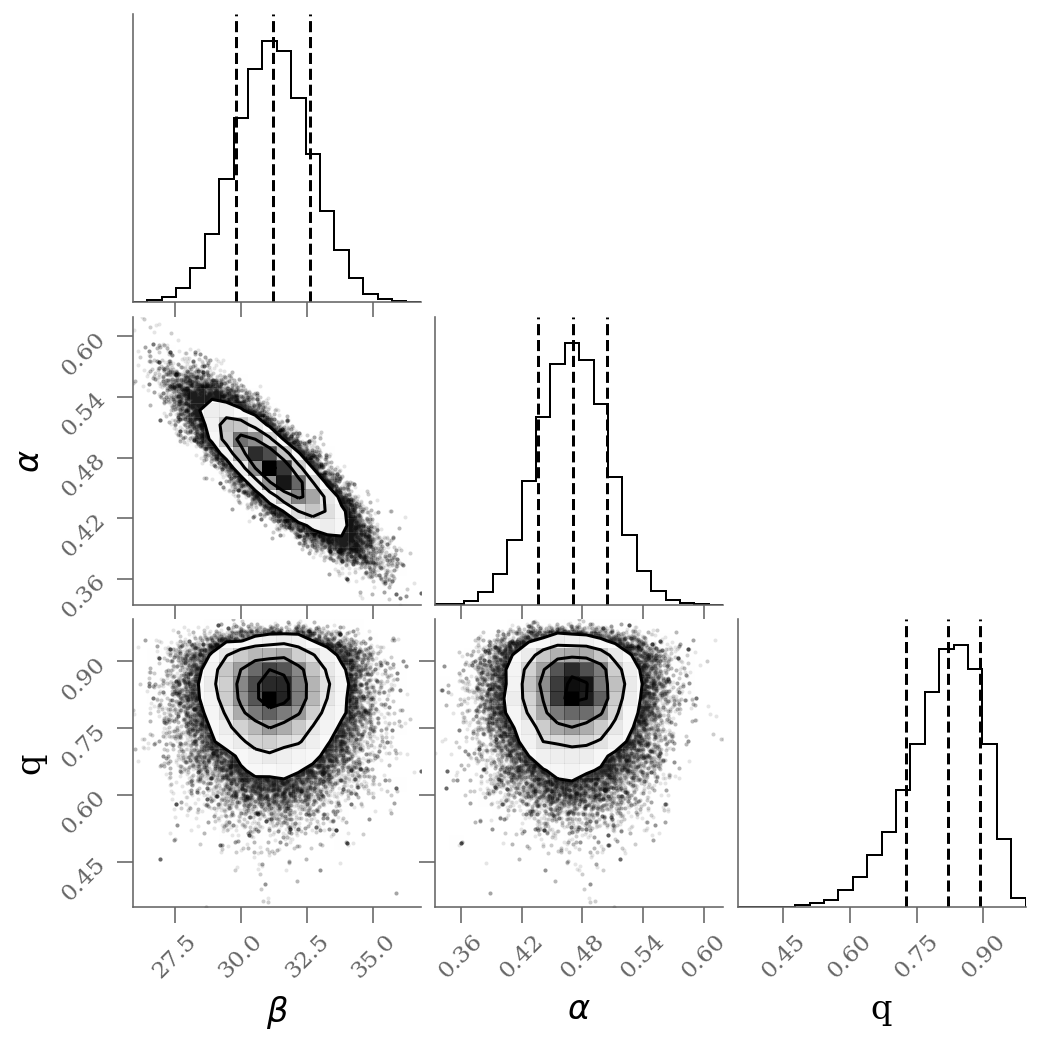

corner(sample, labels=(r'$\beta$', r'$\alpha$'), quantiles=(0.16, 0.50, 0.84));

x, y, sy = np.loadtxt('line_outlier.dat', unpack=True)

plt.errorbar(x, y, yerr=sy, marker='o', ls='None', ms=5, color='k');

plt.xlabel('X');

plt.ylabel('Y');

ypred_blind = sample[:, 0] + sample[:, 1] * x[:, None]

plt.plot(x, ypred_blind[:, :100], color='C0', rasterized=True, alpha=0.3, lw=0.3)

percs_blind = np.percentile(sample, [16, 50, 84], axis=0)

# theta = (intercept, slope): indices 0-2 are the intercept (beta), 3-5 the slope (alpha)

display(Markdown(r"""Without outlier modeling:

* $\alpha$ = {4:0.3g} [{3:0.3g}, {5:0.3g}]

* $\beta$ = {1:0.3g} [{0:0.3g}, {2:0.3g}]

""".format(*percs_blind.T.ravel())

))

Without outlier modeling:

$\alpha$ = 0.238 [0.21, 0.265]

$\beta$ = 39.7 [38.5, 40.9]

It’s clear from this plot that the outliers exert a disproportionate influence on the fit.

This is due to the nature of our likelihood function. One outlier that is, say, \(10\sigma\) (standard deviations) away from the fit will outweigh the contribution of points that are only \(2\sigma\) away: each point enters the log-likelihood through its squared normalized residual, so the former contributes 25 times more than the latter.

In conclusion, least-squares likelihoods are overly sensitive to outliers, and this is causing issues with our fit.
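This sensitivity can be checked without any sampling. With Gaussian uncertainties and flat priors, maximizing the likelihood is a weighted least-squares problem with a closed-form solution. The sketch below solves it with and without the three points that look like outliers by eye (at x = 3, 19 and 72). Removing points by hand is an assumption made only for this illustration; it is not the approach followed in this notebook.

```python
import numpy as np

x = np.array([0, 3, 9, 14, 15, 19, 20, 21, 30, 35,
              40, 41, 42, 43, 54, 56, 67, 69, 72, 88], dtype=float)
y = np.array([33, 68, 34, 34, 37, 71, 37, 44, 48, 49,
              53, 49, 50, 48, 56, 60, 61, 63, 44, 71], dtype=float)
sy = np.array([3.6, 3.9, 2.6, 3.4, 3.8, 3.8, 2.2, 2.1, 2.3, 3.8,
               2.2, 2.8, 3.9, 3.1, 3.4, 2.6, 3.4, 3.7, 2.0, 3.5])

def weighted_line_fit(x, y, sy):
    """Return (intercept, slope) minimizing sum(((y - b - a * x) / sy)**2)."""
    A = np.vstack([np.ones_like(x), x]).T / sy[:, None]   # weighted design matrix
    coeffs, *_ = np.linalg.lstsq(A, y / sy, rcond=None)
    return coeffs

by_eye = np.isin(x, [3, 19, 72])     # hand-picked suspicious points
fit_all = weighted_line_fit(x, y, sy)
fit_clean = weighted_line_fit(x[~by_eye], y[~by_eye], sy[~by_eye])
print("all points      (intercept, slope):", fit_all)
print("without 3 points (intercept, slope):", fit_clean)
```

Three points out of twenty are enough to change the slope substantially, which is the behavior described above.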

One way to address this is to simply model the outliers.

Mixture Model#

The Bayesian approach to accounting for outliers generally involves mixture models so that the initial model is combined with a complement model accounting for the outliers:

$$ P = q \cdot P_{in} + (1 - q) \cdot P_{out}, $$

where \(q\) is the mixing coefficient (between 0 and 1), and \(P_{in}\) and \(P_{out}\) are the probabilities of being an inlier and an outlier, respectively.

So let’s propose a more complicated model that is a mixture between a signal and a background

Graphical model#

A short parenthesis on probabilistic programming: one way to represent the above model is through probabilistic graphical models (PGMs).

Using daft, a Python package, we draw an (inaccurate) PGM of the straight line model with outliers.

import daft

# Instantiate the PGM.

pgm = daft.PGM([4, 4], origin=[-0.5, -0.0], grid_unit=4,

node_unit=2, label_params=dict(fontsize='x-large'))

# Hierarchical parameters.

pgm.add_node(daft.Node("sout", r"$\sigma_{out}$", 0.9, 3.5, fixed=False))

pgm.add_node(daft.Node("g", r"$g$", 0.2, 3.5, fixed=False))

pgm.add_node(daft.Node("beta", r"$\beta$", 2.4, 3.5))

pgm.add_node(daft.Node("alpha", r"$\alpha$", 1.6, 3.5))

# Latent variable.

pgm.add_node(daft.Node("x", r"$x_i$", 1, 1))

pgm.add_node(daft.Node("gn", r"$g_i$", 0.2, 2, observed=False))

pgm.add_node(daft.Node("yin", r"$\hat{y}_{in}$", 2.5, 2, observed=False))

pgm.add_node(daft.Node("yout", r"$\hat{y}_{out}$", 1.5, 2, observed=False))

# Data.

pgm.add_node(daft.Node("y", r"$y_i$", 2, 1, observed=True))

# And a plate.

pgm.add_plate(daft.Plate([-0.2, 0.5, 3.2, 2.2],

label=r"$i = 1, \cdots, N$", shift=-0.1,

bbox={"color": "none"}))

# add relations

pgm.add_edge("x", "y")

pgm.add_edge("alpha", "yin")

pgm.add_edge("beta", "yin")

pgm.add_edge("alpha", "yout")

pgm.add_edge("beta", "yout")

pgm.add_edge("sout", "yout")

pgm.add_edge("g", "gn")

pgm.add_edge("gn", "y")

pgm.add_edge("yin", "y")

pgm.add_edge("yout", "y")

pgm.render();

There are multiple ways to define this mixture modeling.

Brutal version: 1 parameter per datapoint#

\(P_{in, i}\) corresponds to the previous likelihood.

\(P_{out, i}\) is arbitrary, as we do not really have information about the causes of the outliers. We assume a similar Gaussian form centered on the affine relation but with a significantly larger dispersion. Doing so amounts to using the distance to the line to decide whether a point is an outlier (intuitively, a Bayesian version of sigma-clipping).

It is important to note that the total likelihood is a product of sums:

$$ P = \prod_i P_i = \prod_i \left(q_i \cdot P_{in, i} + (1 - q_i) \cdot P_{out, i}\right). $$

Hence, we need to be careful to properly transform the \(\ln P\) into \(P\) when summing the two components (see: np.logaddexp, or scipy.special.logsumexp for more than two terms).
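The following sketch illustrates why the sum inside the product is best evaluated in log space. The log-probabilities are made-up values, chosen small enough that exponentiating them underflows; np.logaddexp computes log(exp(a) + exp(b)) without leaving log space.

```python
import numpy as np

q = 0.8                               # assumed mixing coefficient
lnp_in, lnp_out = -1000.0, -1005.0    # made-up log-probabilities of the two components

# Naive evaluation: both exponentials underflow to 0, so the log is -inf
with np.errstate(divide="ignore"):
    naive = np.log(q * np.exp(lnp_in) + (1 - q) * np.exp(lnp_out))

# Stable evaluation entirely in log space
stable = np.logaddexp(np.log(q) + lnp_in, np.log(1 - q) + lnp_out)
print(naive, stable)
```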

Hence the likelihood becomes explicitly

$$ \begin{array}{ll} P(\{x_i\}, \{y_i\}~|~\theta,\{q_i\},\{\sigma_i\}, \sigma_{out}) = \prod_i \Bigg[ & \frac{q_i}{\sqrt{2\pi \sigma_i^2}}\exp\left[\frac{-\left(\hat{y}(x_i~|~\theta) - y_i\right)^2}{2\sigma_i^2}\right] \\ &+ \frac{1 - q_i}{\sqrt{2\pi \sigma_{out}^2}}\exp\left[\frac{-\left(\hat{y}(x_i~|~\theta) - y_i\right)^2}{2\sigma_{out}^2}\right] \Bigg]. \end{array} $$

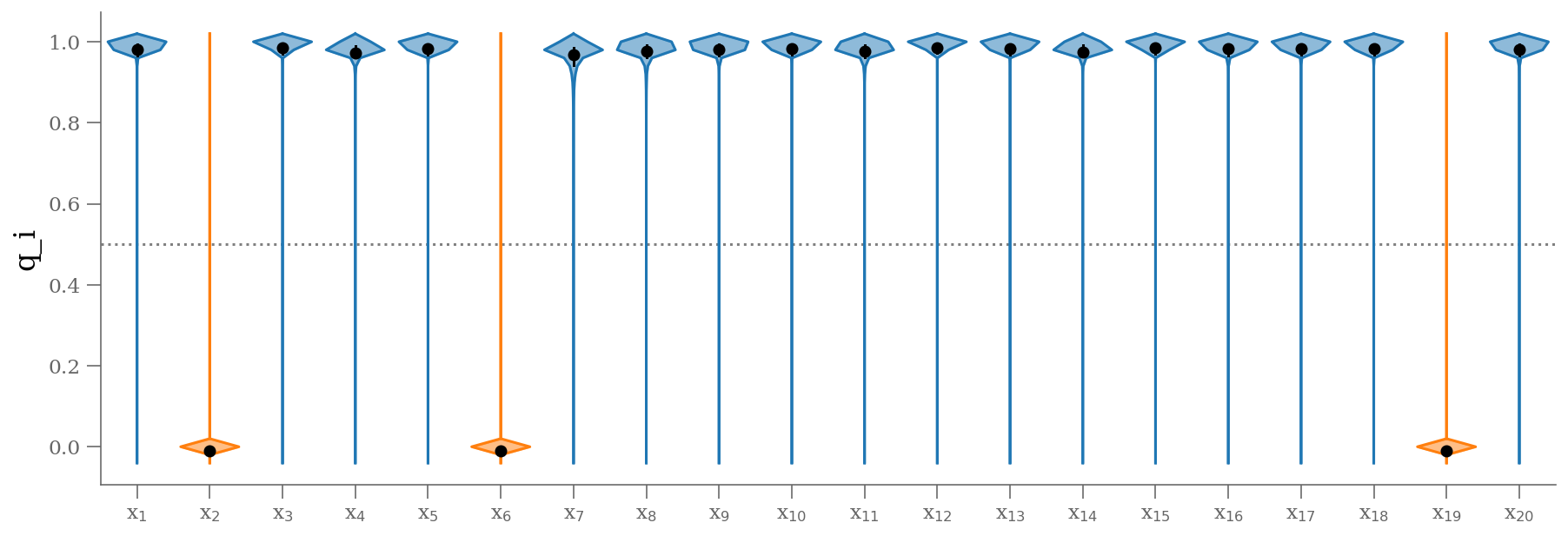

We “simply” expanded our model with \(20\) nuisance parameters: \(\{q_i\}\) is a series of weights, which range from 0 to 1 and encode for each point \(i\) the degree to which it fits the model.

\(q_i=0\) indicates an outlier, in which case a Gaussian of width \(\sigma_{out}\) is used in the computation of the likelihood. This \(\sigma_{out}\) can also be a nuisance parameter. However, for this example, we fix \(\sigma_{out}\) to an arbitrarily large value (large compared with the dispersion of the data).

Note that we have a prior that all \(q_i\) must be strictly between 0 and 1.

def lnp(theta: np.ndarray,
        x: np.ndarray,
        y: np.ndarray,
        sy: np.ndarray) -> np.ndarray:
    """log-likelihood of each data point"""
    dy = y - theta[0] - theta[1] * x
    logL = -0.5 * np.log(2 * np.pi * sy ** 2) - 0.5 * (dy / sy) ** 2
    return logL

def lnposterior(theta: np.ndarray,
                x: np.ndarray,
                y: np.ndarray,
                sy: np.ndarray) -> float:
    """log-posterior"""
    beta, alpha = theta[:2]    # intercept, slope
    q = theta[2:]              # one mixing coefficient per data point
    if alpha < 0:
        return -np.inf
    if (np.any(q > 1) | np.any(q < 0)):
        return -np.inf
    sout = 100                 # fixed dispersion of the outlier component
    lnp_in = lnp(theta, x, y, sy)
    lnp_out = lnp(theta, x, y, sout)
    # log of q * P_in + (1 - q) * P_out, evaluated in log space
    lntot = np.logaddexp(np.log(q) + lnp_in, np.log(1-q) + lnp_out)
    return lntot.sum()

ndim = 2 + len(x)

nwalkers = 48

nburn = 20_000

nsteps = nburn + 1_000

guess = (30, 0.45)

starting_guess = np.zeros((nwalkers, ndim))

starting_guess[:, :2] = np.random.normal(guess, 0.1, (nwalkers, 2))

starting_guess[:, 2:] = np.random.normal(0.5, 0.1, (nwalkers, len(x)))

import emcee

sampler = emcee.EnsembleSampler(nwalkers, ndim, lnposterior, args=[x, y, sy])

sampler.run_mcmc(starting_guess, nsteps, progress=True)

# keep only the samples drawn after the burn-in phase

sample = sampler.chain[:, nburn:, :].reshape(-1, ndim)

100%|██████████| 21000/21000 [01:05<00:00, 318.78it/s]

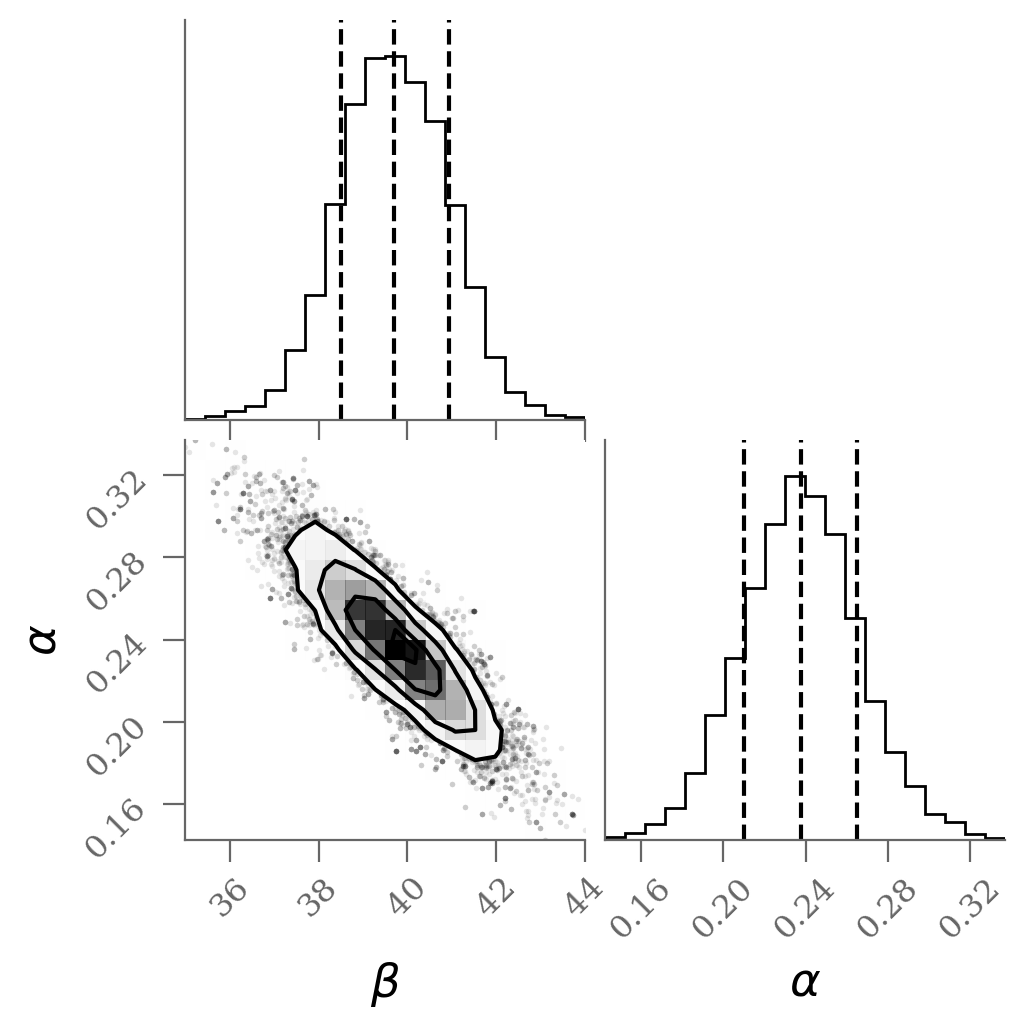

corner(sample[:, :2], labels=(r'$\beta$', r'$\alpha$'), quantiles=(0.16, 0.50, 0.84));

We see a distribution of points near a slope of \(\sim 0.45\), and an intercept of \(\sim 31\). We’ll plot this model over the data below, but first let’s see what other information we can extract from this trace.

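One nice feature of analyzing MCMC samples is that which parameters we treat as nuisance parameters does not matter during the sampling: we marginalize afterwards by simply ignoring the corresponding columns of the sample. Above we ignored the \(\{q_i\}\); we can just as well ignore the slope and intercept and look at the \(\{q_i\}\).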

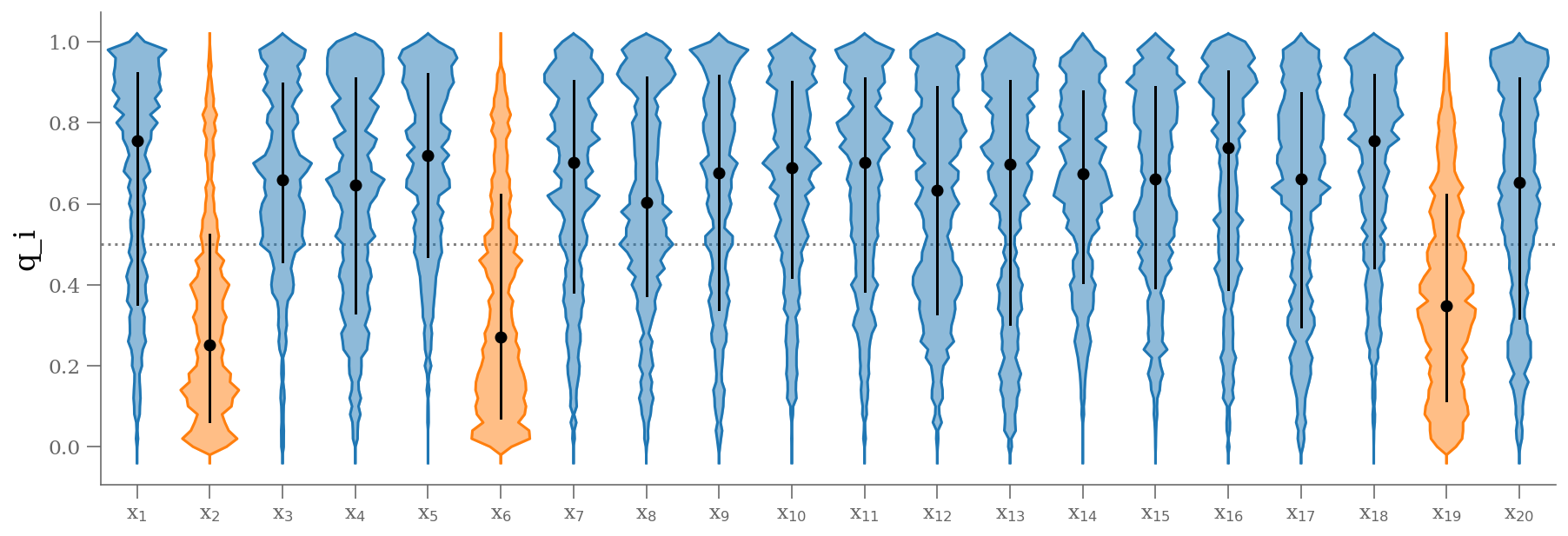

samples_q = sample[:, 2:]

plt.figure(figsize=(15, 5))

colors = np.where(np.median(samples_q, axis=0) < 0.5, 'C1', 'C0')

fast_violin(samples_q, color=colors, bins=np.arange(-0.05, 1.05, 0.02))

ticks = (np.arange(samples_q.shape[1]) + 1)

lbls = [r'x$_{{{0:d}}}$'.format(k) for k in (ticks).astype(int)]

plt.xticks(ticks, lbls)

plt.hlines([0.5], 0.5, len(ticks) + 0.5, color='0.5', zorder=-100, ls=':')

plt.xlim(0.5, len(ticks) + 0.5)

plt.ylabel('q_i');

q = np.median(sample[:, 2:], 0)

outliers = (q <= 0.5) # arbitrary choice

n_outliers = sum(outliers)

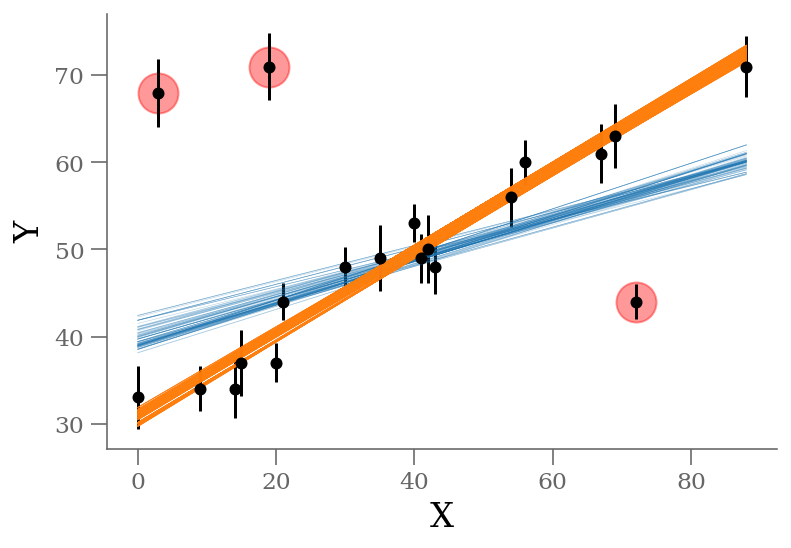

x, y, sy = np.loadtxt('line_outlier.dat', unpack=True)

plt.errorbar(x, y, yerr=sy, marker='o', ls='None', ms=5, color='k');

ypred_brute = sample[:, 0] + sample[:, 1] * x[:, None]

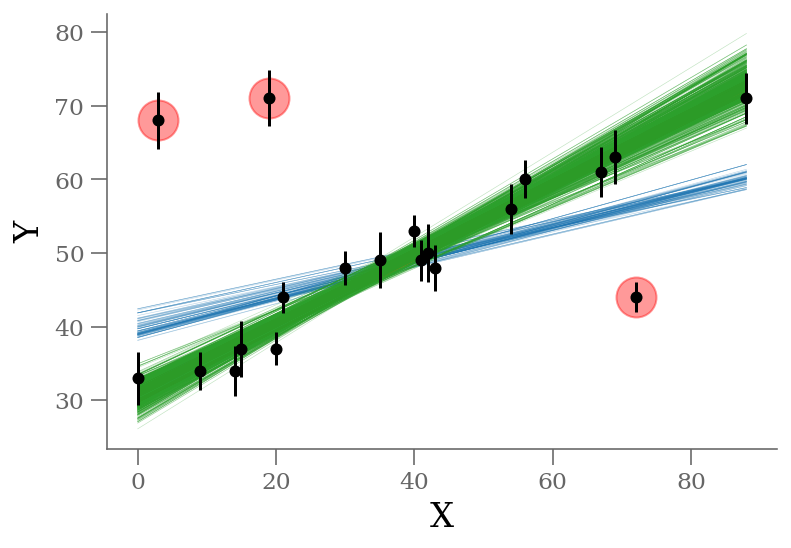

plt.plot(x, ypred_blind[:, :100], color='C0', rasterized=True, alpha=0.3, lw=0.3)

plt.plot(x, ypred_brute[:, :1000], color='C1', rasterized=True, alpha=0.3, lw=0.3)

plt.plot(x[outliers], y[outliers], 'ro',

ms=20, mec='red', mfc='red', zorder=1, alpha=0.4)

plt.xlabel('X');

plt.ylabel('Y');

# theta = (intercept, slope, ...): indices 0-2 are the intercept (beta), 3-5 the slope (alpha)

display(Markdown(r"""Without outlier modeling:

* $\alpha$ = {4:0.3g} [{3:0.3g}, {5:0.3g}]

* $\beta$ = {1:0.3g} [{0:0.3g}, {2:0.3g}]

""".format(*percs_blind.T.ravel())

))

percs_brute = np.percentile(sample[:, :2], [16, 50, 84], axis=0)

display(Markdown(r"""With outlier modeling: 22 parameters

* $\alpha$ = {4:0.3g} [{3:0.3g}, {5:0.3g}]

* $\beta$ = {1:0.3g} [{0:0.3g}, {2:0.3g}]

* number of outliers: ${6:d}$

""".format(*percs_brute.T.ravel(), n_outliers)

))

Without outlier modeling:

$\alpha$ = 0.234 [0.208, 0.263]

$\beta$ = 39.8 [38.5, 41]

With outlier modeling: 22 parameters

$\alpha$ = 0.477 [0.439, 0.511]

$\beta$ = 30.9 [29.7, 32.6]

number of outliers: $3$

The result shown in orange matches our intuition. In addition, the points automatically identified as outliers are the ones we would identify by eye to be suspicious. The blue shaded region indicates the previous “blind” result.

The smarter version#

The previous outlier model takes a simple linear model with \(2\) parameters and turns it into a model with \(N+2\) parameters, \(N\) being the number of data points. This leads to \(22\) parameters in our case. What happens if you have \(200\) data points?

Adding one parameter per data point not only makes the fit expensive, it also makes the problem intractable very quickly.

Based on the same formulation, we can consider a single global \(q\) instead of individual \(q_i\). It characterizes, over the whole ensemble, the probability of being an inlier. In other words, \(1 - q\) is the fraction of outliers in the dataset:

$$ P = \prod_i \left(q \cdot P_{in, i} + (1 - q) \cdot P_{out, i}\right), $$

with \(P_{in, i}\) and \(P_{out, i}\) defined as in the previous model.

We now have only \(1\) nuisance parameter: \(q\), which ranges from 0 to 1.

Similarly to the previous model, \(q \to 0\) means that outliers dominate, in which case the Gaussian of width \(\sigma_{out}\) dominates the likelihood. This \(\sigma_{out}\) can also be a nuisance parameter; as before, we fix it to a large value.

From this model, we can estimate the odds of being an outlier, i.e., the probability that point \(i\) belongs to the outlier component, with

$$ Odds_{outlier}(x_i, y_i, \sigma_i) = \frac{(1-q)\, P(x_i, y_i~|~\alpha, \beta, \sigma_{out})}{q\,P(x_i, y_i~|~\alpha, \beta, \sigma_i) + (1-q)\, P(x_i, y_i~|~\alpha, \beta, \sigma_{out})}. $$
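As a worked example of this expression, the sketch below evaluates it for a single point given its residual to the line. The values q = 0.8 and σ_i = 3 are assumptions for illustration, and σ_out = 100 matches the value fixed in the code of this notebook. The function names are hypothetical.

```python
import math

def gauss(dy, sigma):
    """Gaussian density of a residual dy for a dispersion sigma."""
    return math.exp(-0.5 * (dy / sigma) ** 2) / math.sqrt(2 * math.pi * sigma ** 2)

def outlier_probability(dy, sigma, q, sigma_out=100.0):
    """Probability that a point with residual dy belongs to the outlier component."""
    p_in = q * gauss(dy, sigma)
    p_out = (1 - q) * gauss(dy, sigma_out)
    return p_out / (p_in + p_out)

p_near = outlier_probability(dy=3.0, sigma=3.0, q=0.8)     # 1 sigma from the line
p_far = outlier_probability(dy=30.0, sigma=3.0, q=0.8)     # 10 sigma from the line
print(p_near, p_far)
```

A point close to the line gets a probability near 0, while a point ten standard deviations away gets a probability near 1, which is the soft equivalent of sigma-clipping.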

def lnp(theta, x, y, sy):
    """log-likelihood of each data point"""
    dy = y - theta[0] - theta[1] * x
    logL = -0.5 * np.log(2 * np.pi * sy ** 2) - 0.5 * (dy / sy) ** 2
    return logL

def lnposterior(theta, x, y, sy):
    """log-posterior"""
    beta, alpha = theta[:2]    # intercept, slope
    q = float(theta[2])        # global mixing coefficient
    if (q > 1) or (q < 0):
        return -np.inf
    # q = 0 or q = 1 would give -inf in the logarithms
    q = np.clip(q, 1e-4, 1-1e-4)
    if alpha < 0:
        return -np.inf
    sout = 100                 # fixed dispersion of the outlier component
    lnp_in = lnp(theta, x, y, sy)
    lnp_out = lnp(theta, x, y, sout)
    lntot = np.logaddexp(np.log(q) + lnp_in, np.log(1-q) + lnp_out)
    return lntot.sum()

ndim = 3

nwalkers = 100

nburn = 2_000

nsteps = nburn + 1000

guess = (30, 0.45, 0.5)

starting_guess = np.random.normal(guess, 0.1, (nwalkers, ndim))

import emcee

sampler = emcee.EnsembleSampler(nwalkers, ndim, lnposterior, args=[x, y, sy])

sampler.run_mcmc(starting_guess, nsteps, progress=True)

# keep only the samples drawn after the burn-in phase

sample = sampler.chain[:, nburn:, :].reshape(-1, ndim)

100%|██████████| 3000/3000 [00:27<00:00, 109.20it/s]

corner(sample, labels=(r'$\beta$', r'$\alpha$', r'q'), quantiles=(0.16, 0.50, 0.84));

def odds_outlier_full(theta, x, y, sy):
    """ Odds of being an outlier, for each data point
    """
    beta, alpha = theta[:2]    # intercept, slope
    q = float(theta[2])
    if (q > 1) or (q < 0):
        return np.zeros_like(x, dtype=float)
    # q = 0 or q = 1 would give -inf in the logarithms
    q = np.clip(q, 1e-4, 1-1e-4)
    if alpha < 0:
        return np.zeros_like(x, dtype=float)
    sout = 100
    lnp_in = lnp(theta, x, y, sy)
    lnp_out = lnp(theta, x, y, sout)
    lntot = np.logaddexp(np.log(q) + lnp_in, np.log(1-q) + lnp_out)
    # take the ratio in log space to avoid dividing two underflowing numbers
    return np.exp(np.log(1-q) + lnp_out - lntot)

samples_q = 1 - np.array([odds_outlier_full(sk, x, y, sy) for sk in sample])

plt.figure(figsize=(15, 5))

colors = np.where(np.median(samples_q, axis=0) < 0.5, 'C1', 'C0')

fast_violin(samples_q, color=colors, bins=np.arange(-0.05, 1.05, 0.02))

ticks = (np.arange(samples_q.shape[1]) + 1)

lbls = [r'x$_{{{0:d}}}$'.format(k) for k in (ticks).astype(int)]

plt.xticks(ticks, lbls)

plt.hlines([0.5], 0.5, len(ticks) + 0.5, color='0.5', zorder=-100, ls=':')

plt.xlim(0.5, len(ticks) + 0.5)

plt.ylabel('q_i');

outliers = odds_outlier_full(np.median(sample, axis=0), x, y, sy) >= 0.5

n_outliers = sum(outliers)

x, y, sy = np.loadtxt('line_outlier.dat', unpack=True)

plt.errorbar(x, y, yerr=sy, marker='o', ls='None', ms=5, color='k');

ypred_smart = sample[:, 0] + sample[:, 1] * x[:, None]

plt.plot(x, ypred_blind[:, :100], color='C0',

rasterized=True, alpha=0.3, lw=0.3, zorder=-3)

plt.plot(x, ypred_brute[:, :1000], color='C1',

rasterized=True, alpha=0.3, lw=0.3, zorder=-2)

plt.plot(x, ypred_smart[:, :1000], color='C2',

rasterized=True, alpha=0.3, lw=0.3, zorder=-1)

plt.plot(x[outliers], y[outliers], 'ro',

ms=20, mec='red', mfc='red', zorder=1, alpha=0.4)

plt.xlabel('X');

plt.ylabel('Y');

# theta = (intercept, slope, ...): indices 0-2 are the intercept (beta), 3-5 the slope (alpha)

display(Markdown(r"""Without outlier modeling (blue):

* $\alpha$ = {4:0.3g} [{3:0.3g}, {5:0.3g}]

* $\beta$ = {1:0.3g} [{0:0.3g}, {2:0.3g}]

""".format(*percs_blind.T.ravel())

))

display(Markdown(r"""With outlier modeling (orange): 22 parameters

* $\alpha$ = {4:0.3g} [{3:0.3g}, {5:0.3g}]

* $\beta$ = {1:0.3g} [{0:0.3g}, {2:0.3g}]

* number of outliers: ${6:d}$

""".format(*percs_brute.T.ravel(), n_outliers)

))

percs_smart = np.percentile(sample, [16, 50, 84], axis=0)

display(Markdown(r"""With outlier modeling (green): 3 parameters

* $\alpha$ = {4:0.3g} [{3:0.3g}, {5:0.3g}]

* $\beta$ = {1:0.3g} [{0:0.3g}, {2:0.3g}]

* $q$ = {7:0.3g} [{6:0.3g}, {8:0.3g}]

* number of outliers: ${9:d}$

""".format(*percs_smart.T.ravel(), n_outliers)

))

Without outlier modeling (blue):

$\alpha$ = 0.234 [0.208, 0.263]

$\beta$ = 39.8 [38.5, 41]

With outlier modeling (orange): 22 parameters

$\alpha$ = 0.477 [0.439, 0.511]

$\beta$ = 30.9 [29.7, 32.6]

number of outliers: $3$

With outlier modeling (green): 3 parameters

$\alpha$ = 0.471 [0.436, 0.504]

$\beta$ = 31.2 [29.8, 32.6]

$q$ = 0.821 [0.726, 0.893]

number of outliers: $3$

The inferred parameters are nearly identical, but the convergence is much faster in the last approach.